By John Lovett

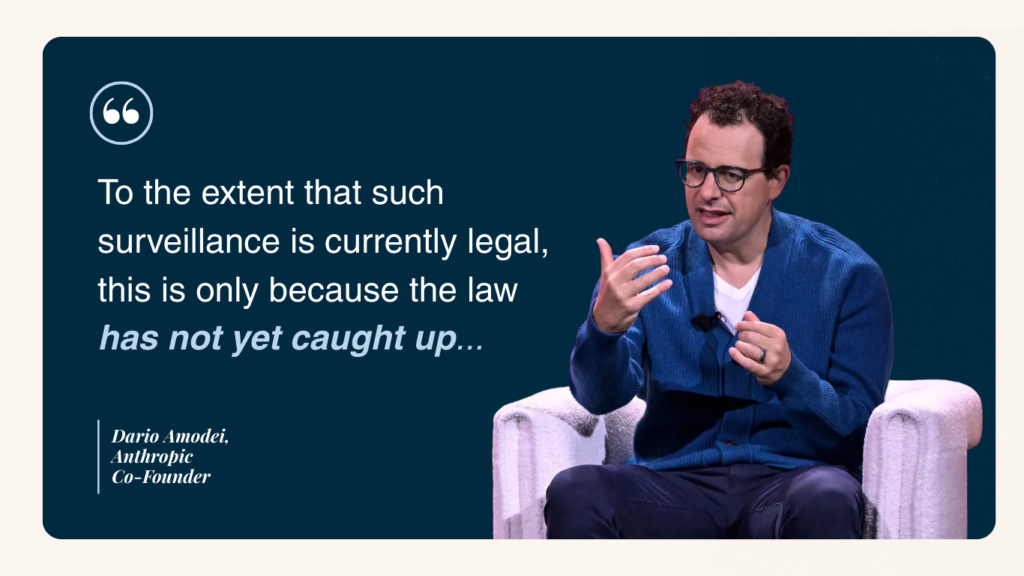

Artificial intelligence (AI) has reshaped nearly every industry. That rapid change, however, has raised key regulatory questions about how AI may be used and what is permissible. AI developer Anthropic and the Department of Defense recently ended negotiations regarding the Department’s use of Anthropic’s Claude tool for analysis, citing Anthropic’s security measures and guarantees that the system would not be used for surveillance. As Anthropic co-founder Dario Amodei noted recently, “To the extent that such surveillance is currently legal, this is only because the law has not yet caught up with the rapidly growing capabilities of AI.” As the law continues to catch up to technology, questions about how to regulate AI continue to grow.

AI is rapidly changing many aspects of life, including business. While AI and machine learning are not new, public policy struggles to keep pace with innovations from companies like OpenAI, Anthropic, and various startups. This development raises questions about how to best regulate the technology to prevent misuse. The federal government has not yet passed extensive AI laws, but both the Biden and Trump administrations have established guidelines and working groups. In contrast, state governments have been drafting and passing their own AI regulations.

With 50 states, AI regulations could differ significantly across jurisdictions. To head off such a patchwork, a 10-year moratorium on state-level AI legislation was inserted into the House of Representatives’ version of the July 2025 budget bill. While the Senate ultimately removed the moratorium on an overwhelming 99-1 vote, state-level AI regulation remains a major part of the broader AI debate. President Donald Trump subsequently signed an executive order in December 2025 seeking to preempt state laws on AI and begin the process of creating a national framework. This overview looks at federal and state-level AI regulatory measures and how the government is attempting to oversee this ever-developing technology.

The State of Federal-Level AI Legislation

The US currently lacks comprehensive federal AI regulation. While presidential executive orders and regulatory bodies like NIST have introduced rules, Congress has not passed a comprehensive AI law. Some legislation, such as the TAKE IT DOWN Act, which addresses the nonconsensual sharing of intimate images, including AI-generated ones, impacts AI. However, a broad legislative framework for AI is missing.

The Trump Administration has issued several AI-related executive orders, beginning with the AI Action Plan in July 2025. This plan focuses on infrastructure and national security through three pillars: boosting AI innovation, developing AI infrastructure, and exporting American AI to counter foreign efforts. While these broad pillars indicate a policy direction focused on accelerating development, specific regulations and best practices remain unclear. Further, the administration’s December executive order seeking to supersede state AI regulation may eventually produce a long-term framework, though that framework will need to go through Congress to be fully enacted.

While the administration’s efforts set the stage for the broader development and expansion of AI companies, they do not touch on matters like AI privacy, AI usage, or many other issues that are currently part of the broader debate over how to use AI.

The State of State-Level AI Legislation

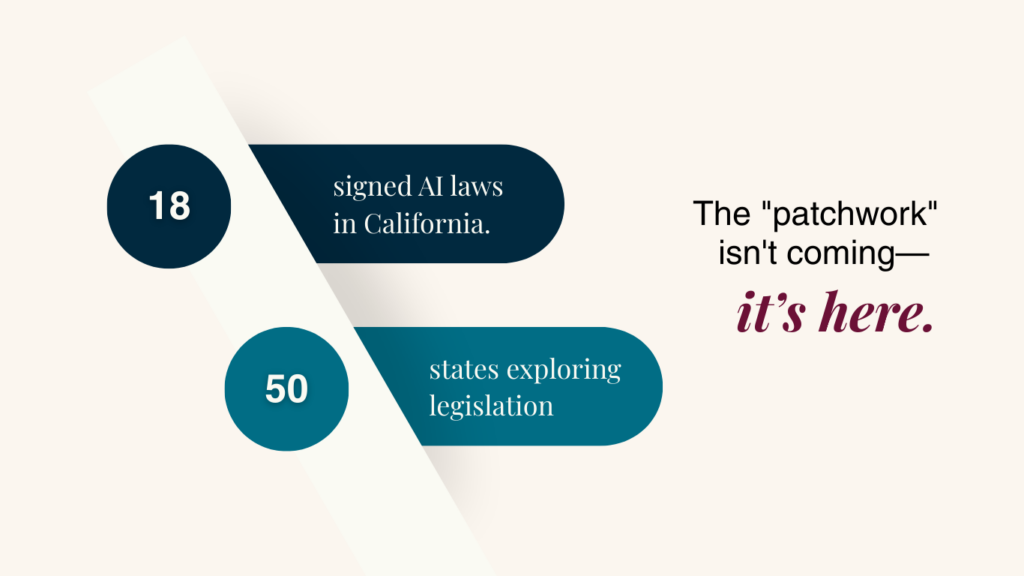

In the absence of concrete federal legislation on AI, the states have taken it upon themselves to pass AI laws, particularly concerning privacy. California, for example, enacted 18 AI-related bills focusing on transparency and privacy, including laws requiring AI-generated content to be watermarked. Most of these laws have been in effect since the beginning of 2025, though the California AI Transparency Act did not go into effect until January 1, 2026. California is also in the process of passing AI safety legislation, which has been endorsed by AI company Anthropic, among others.

Other states have followed suit. All 50 states, Washington, D.C., and Puerto Rico explored AI legislation in 2025, according to the National Conference of State Legislatures (NCSL). Topics explored include ownership of AI-generated content, use in critical infrastructure, anti-stalking, and education. The Brookings Institution notes the most likely topics for state engagement are nonconsensual intimate imagery (NCII) and child sexual abuse materials (CSAM), elections, general AI transparency, automated decision-making, and government AI use.

Because federalism lets each state set its own AI policy, the resulting approaches risk producing contradictory or uneven regulations. For instance, states differ on governmental AI use, with Georgia proposing an AI board to oversee how state agencies use AI, while Montana sought to limit use by state and local governments. Outside of government, Illinois has banned AI in therapy, in contrast with other states that have not regulated AI in healthcare. This patchwork of policies significantly impacts how and where AI can be deployed.

What This Means for Businesses and Professionals

While there is federal guidance on the development of AI, there is little guidance on the usage of AI beyond nonconsensual or illegal images. The use of AI in tech, education, healthcare, governmental matters, retail, and other industries has been left to the states, which have taken similar approaches in some areas and diverged in others. As the technology develops, questions will remain about the ramifications of a splintered set of AI regulations.

In multifaceted policy areas that reach across state lines, one may expect larger states like California and Texas to have a significant effect on policy due to market pressures. Textbook adoption offers a precedent: because of market pressures on textbook manufacturers, what schools in other states adopt is heavily shaped by regulations in California, Texas, and Florida. Legislation in these states could have a similar effect on AI regulations elsewhere. The adoption of watermarks by AI companies is an example of the outsized influence of certain states (like California).

While larger tech firms can likely handle the ramifications of differing regulations across states, smaller tech firms and startups will have to remain vigilant and up to date on state regulations related to the AI technologies they work with. Whether the question is employing an AI chatbot in the healthcare industry or whether marketers need to watermark images they create with AI, smaller companies will need to monitor developments and work together to know when new regulations may appear and what they mean for their work.

AI regulation, at both state and federal levels, is complex for communicators and advertisers. Awareness of state regulations is essential. Most importantly, beyond legal boundaries, ethical and reputational concerns are paramount. AI may be expedient for refining work and gathering information, but public use, such as sharing manipulated images, can damage a brand’s reputation as well as invite legal challenges. As major brands have already demonstrated, the technology is a powerful tool when used correctly.

The secret to navigating these regulatory and reputational risks? Total transparency.